Announcing: Humanloop for Large Language Models

Raza Habib

Today we're excited to open up access to Humanloop for all developers who want to build next-generation applications with large language models like GPT-3.

Large language models let you build apps that feel like science fiction. For the first time, we have AI assistants that can help us write better, code more quickly and even produce art. You don't need to be an expert in AI or machine learning to build incredible applications.

Although it's never been easier to quickly produce an impressive demo, going from a prototype to a full-fledged production app is still challenging. We're building tools to fix that.

LLMs are a new computing platform

Every few decades a new computing platform emerges, one that unlocks so much potential that innovators can build disruptive applications and generation-defining companies on top of it. The internet was such a platform, and Google, Amazon and Netflix are among the companies that emerged from it. The smartphone was another: it dramatically changed the way we live and made huge companies like Uber, WhatsApp and Twitter possible.

We believe that foundational AI models, like GPT-3 or Stable Diffusion, are the start of the next big computing platform. Developers building on top of these models will be able to build a new generation of applications that until recently would have felt like science fiction. We’ve already seen examples of these in the form of intelligent assistants like Jasper AI for writing or Copilot for software, but these are just the beginning.

As the models’ capabilities increase we’ll start to see more and more intelligent software. We’ll see genuine competitors to Google Search that can reliably answer questions in natural language. We’ll see interactive versions of design tools like Figma, where anyone can simply describe what they want and iterate towards a final design. Traditionally hard-to-automate fields like law and accounting will be disrupted by intelligent assistants. AI models will become commonplace inside all software: you should soon expect your CRM to draft your messages for you and your code editor to write your tests.

Demos are easy but products are hard

We've worked closely with OpenAI and some of the earliest adopters of GPT-3 to understand the challenges faced when working with this powerful new technology. Repeatedly we heard that prototyping was easy but getting to production was hard. Evaluation is subjective and difficult. Prompt engineering is more art than science. Models hallucinate and are hard to customise.

To unlock the potential of LLM applications we need a new set of tools built from first principles. Here’s how we’re helping to overcome some of the biggest pain points we’ve seen:

We need the right tools for a new platform

1. Learn directly from user feedback

Evaluating generative models is hard because it’s more subjective than traditional machine learning. If you’re doing simple classification you can measure accuracy, but if you’re using AI to write copy or answer questions, there isn’t a clear “ground truth”. Instead, it makes sense to learn what your users like and directly optimise for specific user outcomes. Humanloop’s SDK makes this easy: it's a simple drop-in replacement for your call to OpenAI’s model that has the added benefit of helping you capture user feedback.

```python
import humanloop as hl

# Humanloop will log the inputs/outputs and the model used.
# model_config holds your model settings and is defined elsewhere in your app.
generation, log_id = hl.generate(
    project="topic-writer",
    provider="OpenAI",
    **model_config
)

# User feedback is captured against that result and that model
hl.feedback(log_id=log_id, group="explicit_feedback", label="👍")
```

Once set up, Humanloop helps you monitor your application in production and explore why certain models are working better than others. Measuring performance is the key to being able to improve it.

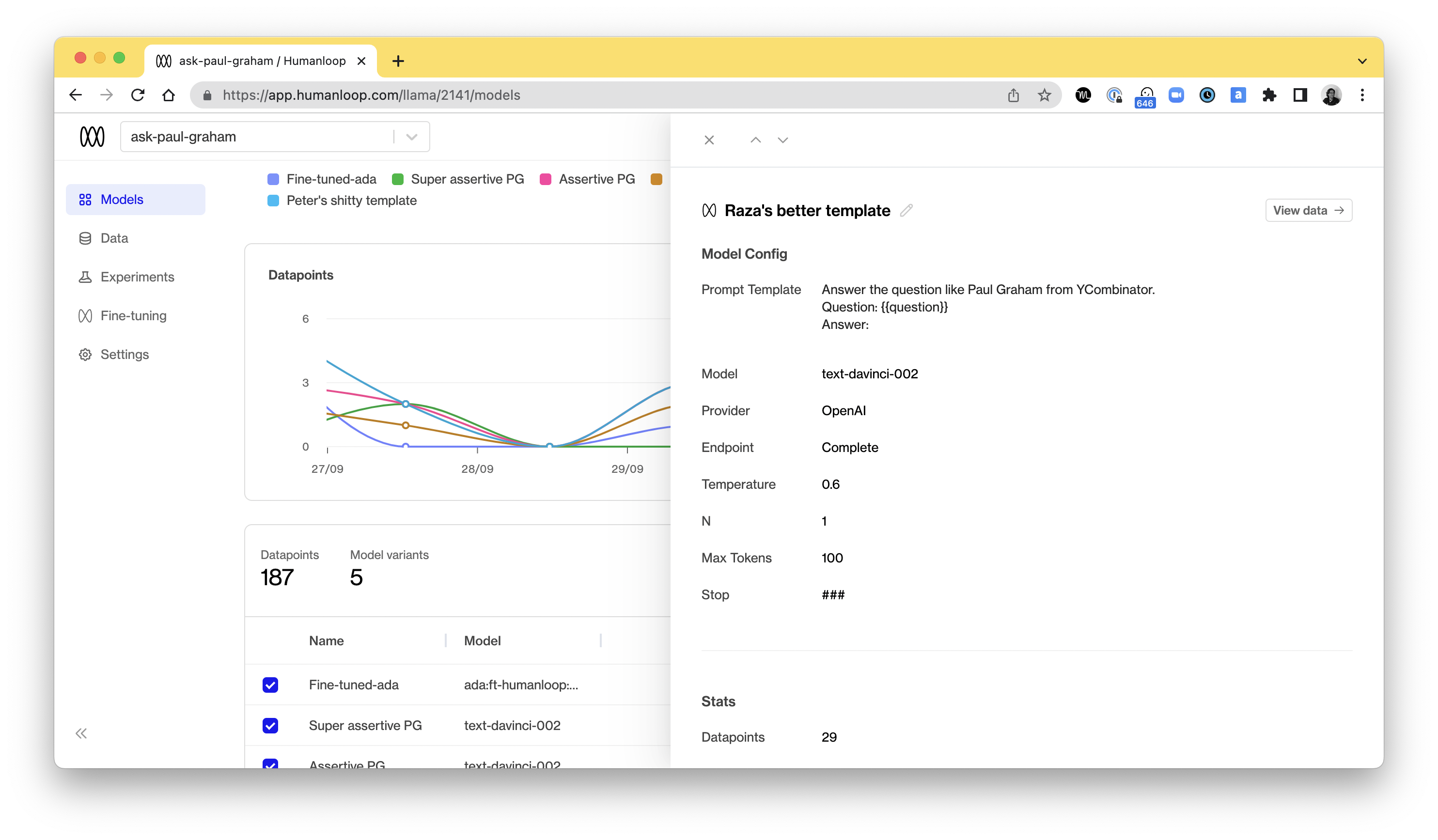

The Humanloop dashboard shows you how your models are performing in production. You can explore the data flowing through your model and the feedback from your users.
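To make the idea of measuring performance from feedback concrete, here is a minimal sketch of how thumbs-up and thumbs-down labels could be turned into a per-model score. It does not use the Humanloop SDK: the record fields (`model`, `label`), the model names and the helper `positive_rate_by_model` are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical feedback records: which model configuration produced an
# output and which label the user gave it. Field names are assumptions.
feedback_log = [
    {"model": "prompt-a", "label": "👍"},
    {"model": "prompt-a", "label": "👎"},
    {"model": "prompt-b", "label": "👍"},
    {"model": "prompt-b", "label": "👍"},
]


def positive_rate_by_model(records, positive_label="👍"):
    """Return the fraction of positive feedback for each model."""
    counts = defaultdict(lambda: [0, 0])  # model -> [positives, total]
    for record in records:
        counts[record["model"]][1] += 1
        if record["label"] == positive_label:
            counts[record["model"]][0] += 1
    return {model: pos / total for model, (pos, total) in counts.items()}


rates = positive_rate_by_model(feedback_log)
for model, rate in rates.items():
    print(f"{model}: {rate:.0%} positive")
```

A simple ratio like this is only a starting point; with few samples per model the numbers are noisy, which is one reason to compare models with a proper experiment.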

2. Experiment with different prompts and models

One of the most exciting things about large language models is that you no longer need large manually annotated datasets. A prompt, which is a simple instruction with a few examples, is often enough to get good performance. The choice of prompt can have a big impact on how well your app works, though, and we saw customers spending a lot of time trying to find good prompts.
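As an illustration of what "an instruction with a few examples" can look like in practice, the sketch below assembles a few-shot prompt as a plain string. The `Topic:` / `Headline:` layout, the example data and the helper `build_prompt` are assumptions made for this example, not a format prescribed by Humanloop or OpenAI.

```python
def build_prompt(instruction, examples, query):
    """Assemble an instruction, worked examples and a new input into one prompt."""
    lines = [instruction, ""]
    for topic, headline in examples:
        lines += [f"Topic: {topic}", f"Headline: {headline}", ""]
    # End with the new input and an open slot for the model to complete.
    lines += [f"Topic: {query}", "Headline:"]
    return "\n".join(lines)


prompt = build_prompt(
    "Write a short headline for each topic.",
    [
        ("electric bikes", "Why E-Bikes Are Taking Over City Streets"),
        ("sourdough", "The Slow Rise of Home-Baked Bread"),
    ],
    "remote work",
)
print(prompt)
```

Small changes to the instruction wording, the number of examples or their order produce different prompts, and each variant is a candidate worth testing against real user feedback.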

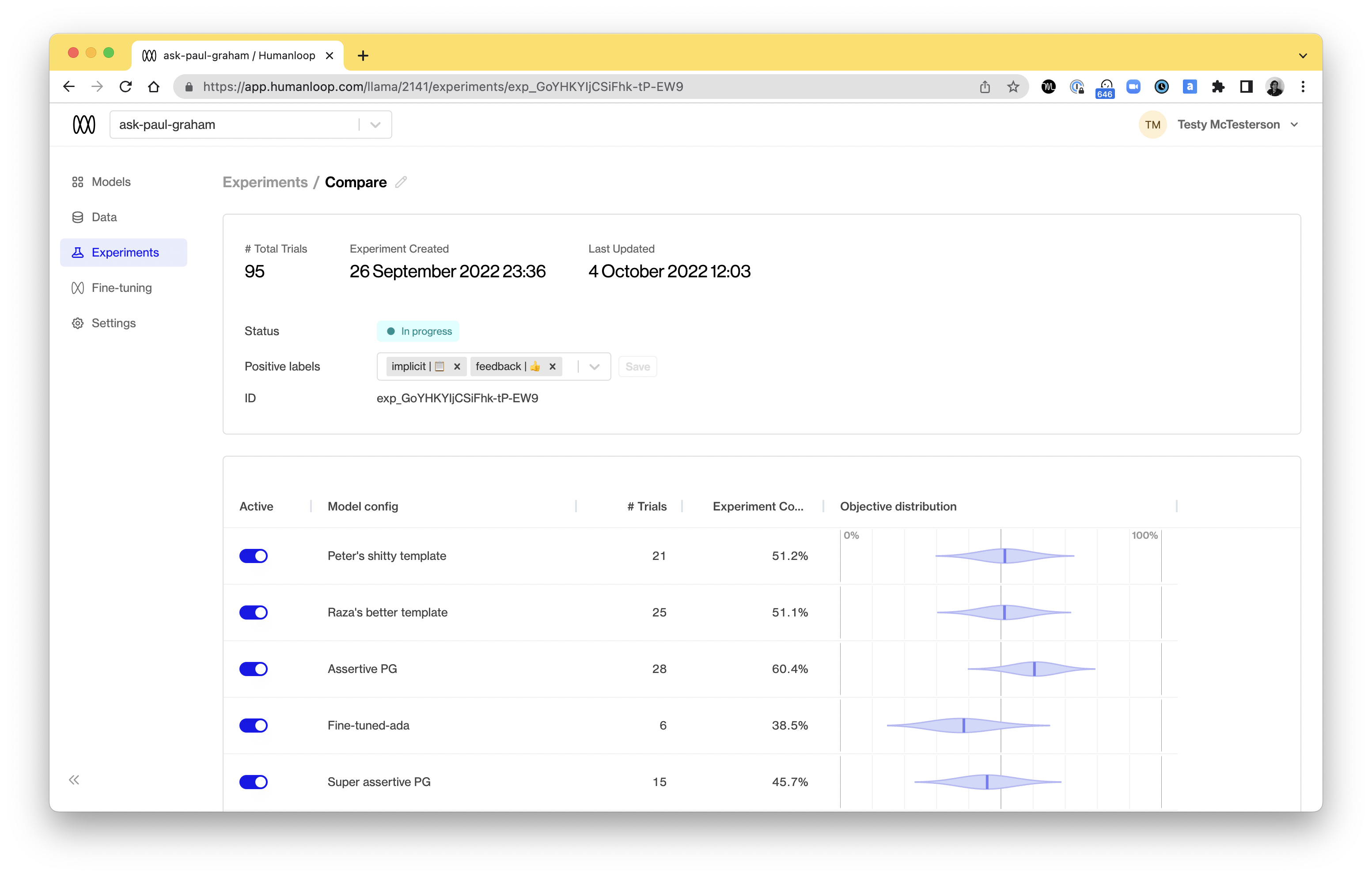

Humanloop takes the guesswork out of “prompt engineering” by letting you run experiments in production. You decide what metric you want to optimise, choose a few models to A/B test and Humanloop will automatically learn which one works best based on user interaction. This lets you improve performance and optimise costs.

Experiments make prompt engineering a science. Choose the metrics you want to optimise and a multi-armed bandit will automatically find the best prompt and model.
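For readers unfamiliar with multi-armed bandits, here is a minimal sketch of one common bandit strategy, Thompson sampling with a Beta prior over binary feedback. The text above does not say which bandit algorithm Humanloop uses, so this choice is an assumption, as are the class name `ThompsonSampler`, the prompt names and the simulated approval rates.

```python
import random


class ThompsonSampler:
    """Beta-Bernoulli Thompson sampling over a fixed set of arms."""

    def __init__(self, arms, seed=None):
        self.rng = random.Random(seed)
        # Beta(1, 1) prior per arm, stored as [alpha, beta].
        self.stats = {arm: [1, 1] for arm in arms}

    def choose(self):
        # Draw a plausible success rate for each arm and pick the highest.
        samples = {
            arm: self.rng.betavariate(alpha, beta)
            for arm, (alpha, beta) in self.stats.items()
        }
        return max(samples, key=samples.get)

    def update(self, arm, success):
        # Positive feedback increments alpha, negative feedback increments beta.
        self.stats[arm][0 if success else 1] += 1


# Simulated experiment: hypothetical "true" approval rates for two prompts.
true_rates = {"prompt-a": 0.3, "prompt-b": 0.7}
sampler = ThompsonSampler(true_rates, seed=0)
users = random.Random(1)
pulls = {arm: 0 for arm in true_rates}

for _ in range(2000):
    arm = sampler.choose()
    pulls[arm] += 1
    sampler.update(arm, users.random() < true_rates[arm])

print(pulls)
```

Unlike a fixed 50/50 A/B split, a bandit shifts traffic towards the better-performing variant as evidence accumulates, so fewer users see the weaker prompt while the experiment runs.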

3. Finetune custom models

Large language models are trained on huge web-scale datasets but they don’t understand your domain or tone. To get the best performance you need to finetune the base model on your own data. This goes a step beyond prompt engineering and actually adjusts the parameters of your model.

Finetuning offers a lot of potential benefits: finetuned models can be smaller without sacrificing performance, which makes them both cheaper and faster. However, finetuning is challenging to get right. It can be hard to know what data to use, which base model to tune and how to choose the hyperparameters.

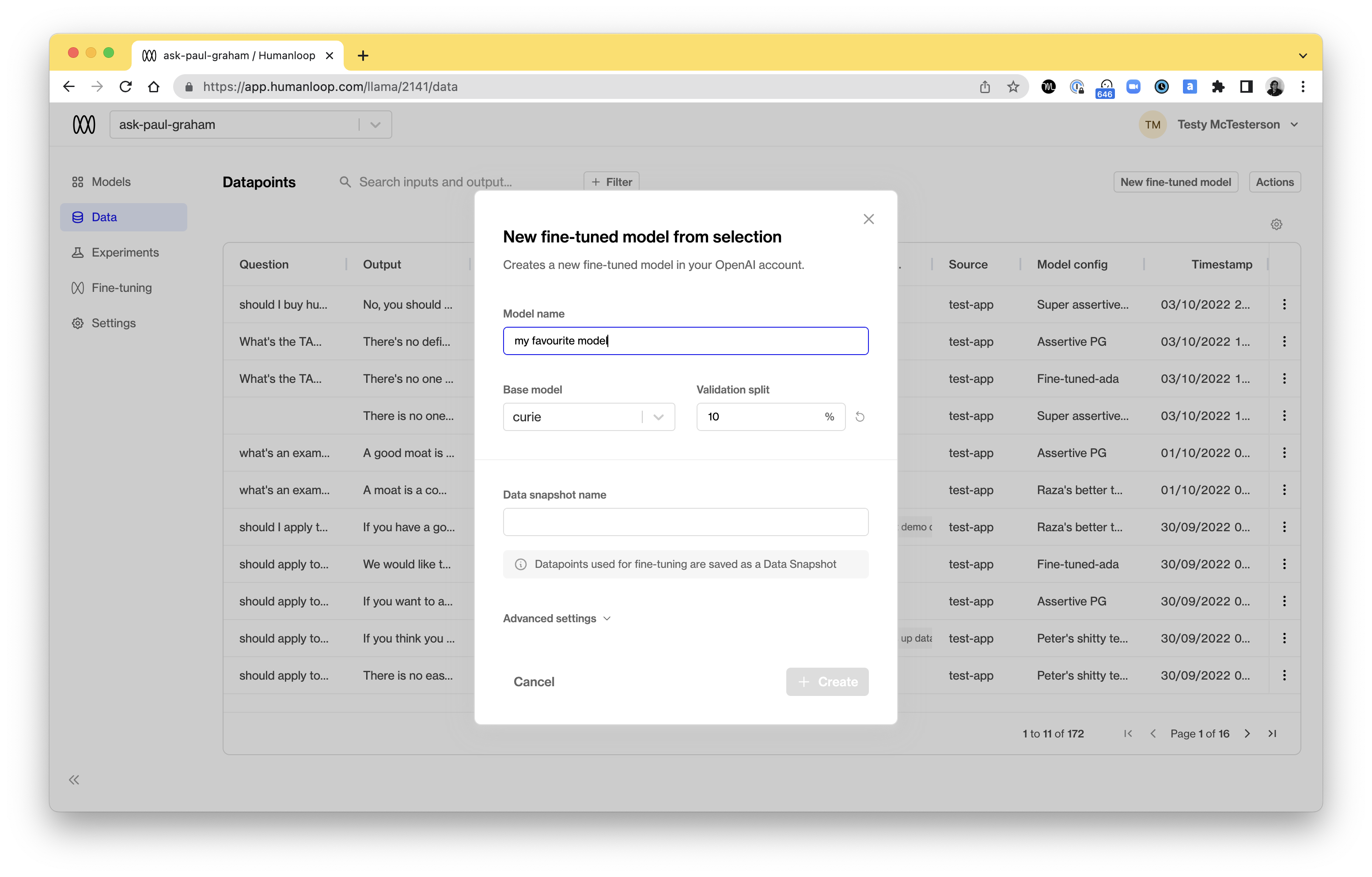

Humanloop allows you to finetune custom models with a single click. You can filter on the evaluation feedback to find the most valuable data and then simply select a base model and we’ll handle the process for you.

You can finetune models with a single click on your best data, helping you build higher performing custom models and a data flywheel for your business.
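The filtering step can be pictured with a small sketch: keep only the generations users rated positively and write them out as training examples. The record fields, the `prompt`/`completion` JSON Lines layout and the helper `to_finetuning_jsonl` are assumptions for illustration; the exact format depends on the provider and base model you finetune.

```python
import json

# Hypothetical logged generations with the feedback users gave them.
logs = [
    {"prompt": "Topic: electric bikes\nHeadline:",
     "completion": " Why E-Bikes Are Taking Over City Streets",
     "label": "👍"},
    {"prompt": "Topic: sourdough\nHeadline:",
     "completion": " Bread",
     "label": "👎"},
    {"prompt": "Topic: remote work\nHeadline:",
     "completion": " The Office Is Wherever You Are",
     "label": "👍"},
]


def to_finetuning_jsonl(records, keep_label="👍"):
    """Keep positively rated generations and serialise them as JSON Lines."""
    rows = [
        {"prompt": r["prompt"], "completion": r["completion"]}
        for r in records
        if r["label"] == keep_label
    ]
    return "\n".join(json.dumps(row, ensure_ascii=False) for row in rows)


jsonl = to_finetuning_jsonl(logs)
print(jsonl)
```

Each round of finetuning on this filtered data should produce outputs that earn more positive feedback, which in turn yields more training examples.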

Build defensible products

Everyone has access to the same base models. If you want to build successful products and applications on top of GPT-3 or similar models, you have to find a way to add value beyond the models. One way is to embed the models into useful workflows. Another is to use the data you capture to iteratively finetune and improve the models. The user feedback data Humanloop captures allows you to finetune better custom models, and the better custom models improve the quality of your data. This data flywheel allows you to build better and better models and run ahead of the competition.

Sign Up for Early Access

LLMs are the next big computing platform, at least as important as the internet and perhaps more. We want to empower the next million developers to build AI-first applications.

If you want to build successful products and apps with GPT-3, sign up now.
